The Hypothesis Defense of Human Non-Determinism Based on LLM Determinism

Introduction

If you ask a calculator what 1 + 1 is, it will tell you 2. If you ask it a million times, it will tell you 2 a million times. We expect computers to be logic engines — perfect, repeatable, and deterministic.

When we build Large Language Models (LLMs), we assume they follow the same rules. If we set the "Temperature" to 0 (telling the model to always pick the most likely next word), we expect the exact same essay every time we run the prompt.
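As a rough illustration of what the temperature setting does, here is a minimal Python sketch of next-token selection. The function name `sample_token` and the toy logits are assumptions made for this example, not any library's API; real inference stacks do this on the GPU over a vocabulary of many thousands of tokens.

```python
import math
import random


def sample_token(logits, temperature, rng):
    """Pick one token from a dict of {token: logit} (hypothetical helper)."""
    if temperature == 0:
        # Greedy decoding: always take the highest-scoring token.
        return max(logits, key=logits.get)
    # Divide logits by the temperature, then apply a numerically stable softmax.
    scaled = {tok: logit / temperature for tok, logit in logits.items()}
    top = max(scaled.values())
    weights = {tok: math.exp(s - top) for tok, s in scaled.items()}
    tokens = list(weights)
    # random.choices normalizes the weights for us.
    return rng.choices(tokens, weights=[weights[t] for t in tokens], k=1)[0]


logits = {"cat": 2.0, "dog": 1.5, "fish": 0.1}  # toy scores, not real model output
rng = random.Random(0)

greedy = [sample_token(logits, 0, rng) for _ in range(5)]
sampled = [sample_token(logits, 1.0, rng) for _ in range(5)]
print(greedy)   # the same token every time
print(sampled)  # may vary from draw to draw
```

On paper, the temperature 0 branch contains no randomness at all, which is why the behavior described next is surprising.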

But if you have worked with LLMs in production, you know they don't.

Even with the randomness turned off, LLMs often give different answers to the same question depending on when you ask them. Why? The answer lies deep in the "physics" of computer chips, and it offers a fascinating mirror to the "physics" of the human mind.

The Butterfly Effect in the GPU

To understand why AI changes its mind, we have to look at the atomic unit of AI labor: The Kernel.

A "Kernel" is a small, highly optimized program running on a GPU (Graphics Processing Unit) that performs a specific mathematical task, like multiplying matrices. (Readers with a CS background will know that kernels exist outside of GPUs as well, but here we focus on AI hardware.)

In a recent deep-dive, Thinking Machines Lab uncovered the root cause of AI non-determinism. It isn't a ghost in the machine; it's a conflict between math and speed.

The "Chef" Analogy

Imagine a chef (the Kernel) making a soup.

Scenario A: He is cooking for just one customer. He chops carrots, then onions, then celery, and throws them in the pot.

Scenario B: He is cooking for 100 customers at once (a "Batch"). To be faster, he changes his strategy. He chops 100 carrots all at once, then 100 onions.

The ingredients are the same. The amounts are the same. But the order in which they were added to the pot changed.

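Depending on the GPU's workload, the Kernel may switch its parallelization strategy, which changes the order in which numbers are added together. In production, where the batch size depends on how many other requests arrive at the same moment, we cannot realistically predict the exact execution path (see the reference for detailed examples [1]). The order matters because floating-point addition is not associative: regrouping the same numbers can change the rounded result.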

(0.1 + 1e20) - 1e20

>>> 0

0.1 + (1e20 - 1e20)

>>> 0.1

These "small" rounding errors have massive consequences in deep neural networks. It is not just about calculation load; it is about error propagation.

That tiny floating-point error ripples through the billions of parameters and hundreds of layers in the model. Eventually, it flips a 49.9% probability token to a 50.1% probability token. The model chooses a different word. Once that word changes, the context for the next word changes, and suddenly, the whole essay is different.
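The flip itself can be sketched in a few lines of Python. The two candidate tokens, the logit values, and the size of the perturbation below are invented for illustration; the point is only that greedy selection is discontinuous, so a perturbation far too small to matter numerically can still change which token wins.

```python
import math


def softmax(logits):
    """Numerically stable softmax over a list of floats."""
    top = max(logits)
    exps = [math.exp(x - top) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def greedy_pick(tokens, logits):
    """Return the token with the highest probability (temperature 0)."""
    probs = softmax(logits)
    best = max(range(len(tokens)), key=lambda i: probs[i])
    return tokens[best]


tokens = ["however", "therefore"]

# Run A: "however" leads by a hair (toy numbers).
logits_a = [3.2000001, 3.2]
# Run B: the same computation, but accumulated rounding error has nudged
# the first logit down by 2e-7 (an assumed, illustrative amount).
logits_b = [logits_a[0] - 2e-7, logits_a[1]]

pick_a = greedy_pick(tokens, logits_a)
pick_b = greedy_pick(tokens, logits_b)
print(pick_a, pick_b)  # the two runs choose different tokens
```

From that point on the two runs are conditioning on different text, so they have no reason to converge again.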

The Verdict: LLM non-determinism is an engineering artifact. It can be fixed (by forcing the Kernel to calculate the exact same way regardless of batch size), but usually, we don't fix it because we prefer speed over perfect repetition.
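To make the fix concrete, here is a toy Python model of the idea. It is a sketch under loud assumptions: `adaptive_kernel`, `batch_invariant_kernel`, and the chunk-size heuristic are hypothetical stand-ins, and real GPU kernels are far more involved. What it does show is the core mechanism: if the reduction strategy depends on batch size, the same row can produce different sums, and if the strategy is pinned, it cannot.

```python
def reduce_row(row, chunk_size):
    """Sum a row by adding up fixed-size chunks, then adding the partial sums."""
    partials = []
    for start in range(0, len(row), chunk_size):
        acc = 0.0
        for x in row[start:start + chunk_size]:
            acc += x
        partials.append(acc)
    total = 0.0
    for p in partials:
        total += p
    return total


def adaptive_kernel(batch):
    """Hypothetical 'fast' kernel: the chunking depends on how busy it is."""
    # Assumed heuristic: a small batch splits each row into more chunks to
    # keep all cores busy; a large batch lets one worker handle a whole row.
    chunk_size = 2 if len(batch) < 4 else 4
    return [reduce_row(row, chunk_size) for row in batch]


def batch_invariant_kernel(batch, chunk_size=2):
    """Hypothetical 'reproducible' kernel: same chunking for every batch size."""
    return [reduce_row(row, chunk_size) for row in batch]


row = [1e20, 0.1, -1e20, 0.1]  # our request; the true sum is 0.2

alone = adaptive_kernel([row])[0]     # server is idle
busy = adaptive_kernel([row] * 8)[0]  # seven other requests share the batch
print(alone, busy)                    # 0.0 0.1

fixed_alone = batch_invariant_kernel([row])[0]
fixed_busy = batch_invariant_kernel([row] * 8)[0]
print(fixed_alone == fixed_busy)      # True
```

Neither kernel returns the exact answer here. Batch invariance does not make the arithmetic more accurate; it only makes the same request produce the same result every time, and the pinned strategy may be slower than the adaptive one.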

The Human Kernel — The Unsolvable Non-Determinism

If the most precise logic machines we have ever built (GPUs) suffer from "noise" due to their physical substrate, what does that imply for the human brain?

We are, after all, neural networks running on hardware. Just built with meat instead of metal. But here, the parallel splits.

1. The Hardware: Thermal Noise

The AI Kernel suffers from Floating Point Noise. It is "deterministic chaos" — if we could control every electron and every scheduler perfectly, we could predict the outcome.

The Human Kernel, however, suffers from Fundamental Physics. We lack a deterministic map at the atomic level. Biological neurons operate at a nanoscale where Brownian motion (random thermal fluctuation) dominates. Whether a neurotransmitter vesicle releases or not isn't just a matter of inputs; it is subject to the fundamental randomness of the universe (and potentially the Heisenberg uncertainty principle).

We cannot "fix" this noise because the noise is the medium itself.

2. The Software: An RL Agent in a Stochastic World

Functionally, the human architecture resembles a high-level Reinforcement Learning (RL) algorithm attached to a reasoning engine (the cortex).

- The RL Component: Our basal ganglia function like a Value Function, predicting rewards (dopamine) and penalizing errors.

- The Reasoning Component: Our cortex acts like an LLM, but a dynamic one. Unlike a frozen model, it trains and runs inference simultaneously, with a massive context window, planning future steps based on those rewards.
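For readers less familiar with RL, the "Value Function" half of this analogy can be sketched as a temporal-difference (TD) update, where the prediction error plays the role the analogy assigns to dopamine. This is a textbook-style illustration, not a model of the brain; the function name, learning rate, discount factor, and the 70% reward probability are all assumptions chosen for the example.

```python
import random


def td_update(value, reward, next_value, alpha=0.1, gamma=0.9):
    """One TD(0) step: move the value estimate toward the observed outcome."""
    # Prediction error: how much better or worse things went than expected.
    prediction_error = reward + gamma * next_value - value
    new_value = value + alpha * prediction_error
    return new_value, prediction_error


# A single surprising reward raises the estimate a little.
first_value, first_error = td_update(value=0.0, reward=1.0, next_value=0.0)
print(first_value, first_error)  # 0.1 1.0

# In a noisy world the reward only arrives some of the time (assumed 70%),
# so the estimate keeps fluctuating instead of settling on one number.
rng = random.Random(0)
value = 0.0
for _step in range(200):
    reward = 1.0 if rng.random() < 0.7 else 0.0
    value, _error = td_update(value, reward, next_value=0.0)
print(value)
```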

But here is the catch: An AI agent usually learns in a fixed environment (like a chess board or a physics simulation). Humans learn in a Non-Deterministic environment.

We face a "Double Noise" problem. First, our internal hardware (neurons) has a "Temperature" that can never be set to 0 due to thermal noise. Second, the external world we interact with is not a static dataset; it is a chaotic, non-deterministic stream of sensory data.

Conclusion: The Hypothesis Defense Of Human Non-Determinism

We explained how Thinking Machines Lab defeated LLM non-determinism, and we argued that this same non-determinism is evidence for human non-determinism. The difference is that LLM non-determinism is something we can choose to remove. We want LLMs to be reliable tools. As the Thinking Machines research [1] shows, we can achieve this by enforcing "Batch Invariance": making the machine behave identically no matter how busy the server is.

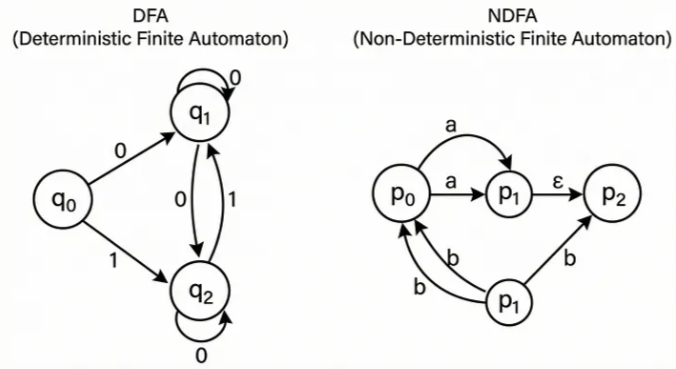

Human non-determinism, by contrast, is the default state. Because of atomic-level non-determinism, both in our own structure and in the outside world, humans cannot be described as DFAs (Deterministic Finite Automata) but rather as NDFAs (Non-Deterministic Finite Automata).

This also supports the theological thesis I discussed in my independent paper, 'A Proposal Theory for Independent Theology' [2].

Extras

If you want to explore the topic in more detail, visit the blog's main website.